Responsible AI

Understand AI decisions before AI makes decisions for your customers

“Responsible AI (RAI) is the only way to mitigate AI risks. Future payoffs will give early adopters an edge that competitors may never be able to overtake.”

“Earn and keep your customers' trust. Lack of trust in AI systems is a growing barrier to adoption in enterprises with more organizations selecting enterprise products based on AI commitments and practices. A responsible AI approach earns trust.”

“To create trust in AI, organizations must move beyond defining Responsible AI principles and put those principles into practice. To build trust among employees and customers, develop explainable AI that is transparent across processes and functions.”

77%

of global customers think organizations must be held accountable for their misuse of AI

Accenture’s 2022 Tech Vision research

85%

of AI projects will deliver erroneous outcomes through 2022 due to bias in data, algorithms or the teams responsible for managing them

Gartner report

58%

of risk managers surveyed identify AI as the biggest potential cause of unintended consequences. Only a handful are fully capable of assessing AI-adoption risks

Global survey of risk managers

What is Responsible AI?

Responsible AI (RAI) is a proven way to remedy unreliable models that fail silently over time and degrade the customer experience with faulty decisions.

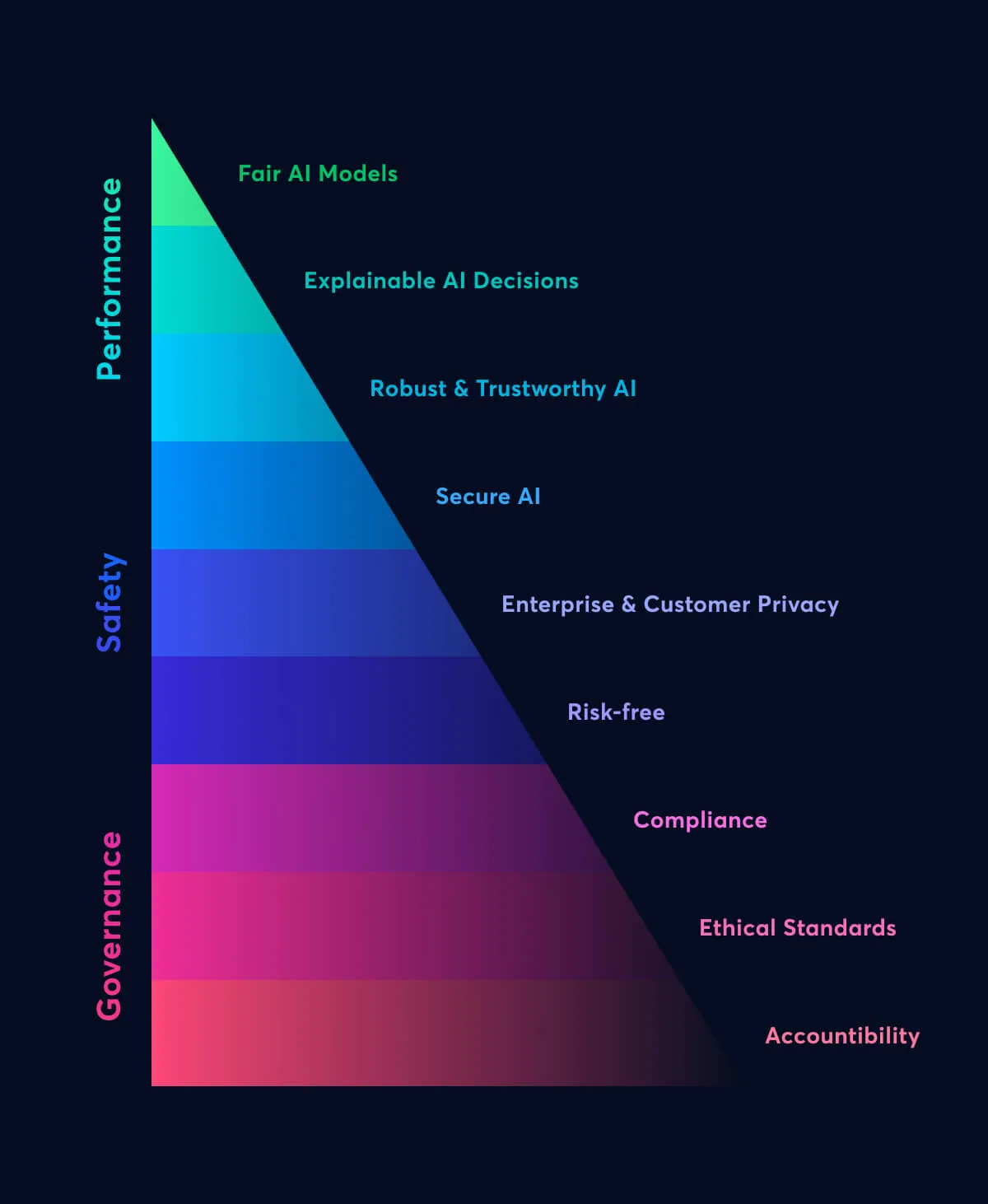

AI-led decisions need to be evaluated on several fronts, such as business risk, safety, data health, and equality. RAI supports well-grounded AI decisions with fewer biases and risks, leading to higher customer satisfaction over time.

With RAI, teams develop, deploy, and scale AI for constructive purposes that affect people and society positively. It builds people’s trust and confidence in AI by making AI applications more accountable, ethical, and transparent.

Find out with Responsible AI

Is AI supporting accurate decisions?

Is AI violating any policy or privacy?

Is there a way to monitor AI and its outcomes?

Responsible AI

The crux of trustworthiness

Localizes the root cause of biases and identifies issues such as sampling imbalance, data irregularities, and partial sources.

Provides complete transparency at global, regional, and local levels with a step-by-step view behind every model decision.

Implements risk identifiers such as drift, quality, and performance monitors to track anomalous behavior thoroughly and trigger alerts when it occurs.

Enables hierarchical accountability and partial controls to minimize risks and maintain a high-speed recovery environment.

Supports end-to-end security for data and model artifacts to ensure complete privacy for customers and the enterprise.

Our approach to Responsible AI

Censius is a Responsible AI partner for enterprises, helping AI/ML teams to swiftly cross the bridge from unreliable, biased, and risk-prone AI models to a scalable ecosystem of trustworthy AI solutions.

Censius offers an end-to-end AI observability platform that delivers automated monitoring and proactive troubleshooting to build reliable models.

Monitoring

- Continuously monitor performance, data quality, and model drift

- Track prediction, data, and concept drift

- Real-time alerts for violations
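To make the idea of a data drift monitor concrete, here is a minimal sketch using the Population Stability Index (PSI), one common way to compare a feature's production distribution against a reference sample. This is an illustration only, not the Censius API: the function names `population_stability_index` and `drift_alert` are hypothetical, and the 0.2 alert threshold is a widely used rule of thumb that we assume here rather than a value taken from this page.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between a reference sample and a current sample.

    Bin edges are equal-width and derived from the reference sample only.
    Current values outside that range are clamped into the first or last bin.
    """
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread; cannot build bins")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        total = len(values)
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / total, eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def drift_alert(
    reference: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.2,  # assumed rule-of-thumb threshold
) -> bool:
    """Return True when the PSI exceeds the alert threshold."""
    return population_stability_index(reference, current) > threshold


if __name__ == "__main__":
    training = [i / 100 for i in range(100)]           # reference sample
    production = [0.5 + i / 200 for i in range(100)]   # shifted sample
    print("PSI:", population_stability_index(training, production))
    print("Alert:", drift_alert(training, production))
```

In practice a check like this would run on a schedule for each monitored feature and for the model's predictions, with alerts routed to the owning team. Concept drift needs ground-truth labels or a proxy, so it is not covered by this sketch.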

Model Health

- Track performance across model versions

- Ensure models are running correctly, even without ground truth

- Compare a model's historical performance

Explainability

- Understand the "why" behind model decisions

- Improve performance for specific cohorts

- Ensure that models stay compliant


AI Observability Guide

A proven route to implement Responsible AI

Get our thorough AI Observability guide for the following takeaways:

Top industry-wide practices

Critical novel concepts

Business impact of observability

What do you mean by Responsible AI?

Responsible AI (RAI) brings together practices to develop, deploy, and scale AI for good causes that impact people and society fairly.

Are We Ready For AI Explainability?

AI is scaling, yes. But are we ready to shift our focus to explainability?

Model Monitoring in Machine Learning - All You Need to Know

What can go wrong with models in production? What needs to be monitored, and why?